Why scut work is growing in medical education and what to do about it

December 29, 2021

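From entrustable professional activities (EPAs) and competency-based medical education (CBME) through to continuous quality improvement (CQI), the alphabet soup of medical education is driving rapid growth in the menial and tedious administrative and data entry tasks known as scut work.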

Whether it’s the tracking and maintenance of spreadsheets from EPA assessments, the multi-dimensional increase in admin to support CBME, or the policies and processes to address LCME Standard 1.1 for CQI, medical schools are being flooded by data requirements and the administrative burden of managing them.

The burden of accountability

In the daily operations of healthcare education, a large amount of data from disparate sources (assessment and evaluation platforms, examination results, surveys, etc.) flows into the school’s administration teams and is then disseminated across multiple stakeholders. Depending on the methods of data collection and dissemination, schools are often left with multiple versions of the data scattered across resources. Making sense of the data is time-consuming and prone to errors.

Scale all this up when it comes time for accreditation review. With multiple data sources, reporting requirements, standards and people involved, preparing for an accreditation review comes with a large amount of administrative scut work. Data from different sources need to come together to build a complete picture of standards compliance. Some schools bring in temporary teams of people at accreditation time in order to extract, massage, and “package” data for external consumption.

Meeting or exceeding standards

Accountability in North American medical education flows back to the Liaison Committee on Medical Education (LCME). The LCME accreditation standards, “Functions and Structure of a Medical School”, lay out the fundamental standards schools must meet to gain and keep accreditation.

The very first standard, 1.1, mandates CQI:

1.1 Strategic Planning and Continuous Quality Improvement

A medical school engages in ongoing strategic planning and continuous quality improvement processes that establish its short and long-term programmatic goals, result in the achievement of measurable outcomes that are used to improve educational program quality, and ensure effective monitoring of the medical education program’s compliance with accreditation standards.

LCME revised its standards in 2015. One of the changes in the 2015 revision was to bring this new standard on continuous quality improvement to the forefront. That was six years ago. Given an eight-year accreditation cycle, this standard is still very new. Its implications and implementation are still being understood by medical schools. To respond to this mandate, some schools have created offices of Continuous Quality Improvement while others have formed CQI committees. But it’s slow going. Getting up to speed on the right framework, approach, and process can be a daunting set of tasks that can obscure the real work of CQI.

The goal of CQI is to enable medical schools to identify issues and take corrective action before a course wraps, a new class matriculates, or the next accreditation cycle begins. There is a large amount of data involved, across many different systems, and making sense of this data in order to achieve true CQI and drive real-time decision making can be cumbersome.

And here lies the problem. Standard 1.1 is about continuous quality improvement. Instead of a review cycle at accreditation time every four or eight years, schools must be continuously extracting, massaging, and packaging data in order to stay compliant. The increasing volume of data to support decision making, the reporting required to review and take action, and the involvement of medical education leadership and administration are together creating tremendous growth in menial data entry and editing scut work.

Assessing competencies

CBME generates both excitement and controversy as an assessment methodology intended to help produce well-trained doctors who demonstrate competency in all of their professional actions. The emergence of CBME is driven by a desire to establish an outcomes-based approach to medical education and training.

As medical schools take steps toward a competency-based assessment model for medical education (CBME), administration must be prepared for an increase in both the frequency and scope of assessment. The real power driving this methodology is in the data, including entrustable professional activities (EPAs) with varied criteria for each EPA, tracked across multiple learners, supervisors, and sites. Collecting, tracking, and analyzing that data in a meaningful way is a challenge for medical schools. While CBME aspires to offer transparency into the learning process, the additional layers of processes, data, and analysis can be time-consuming.

Impact of the pandemic

While many schools have not yet transitioned to a CBME model as their basis for operations and assessment, we have seen schools leverage entrustable professional activities (EPAs) during the COVID-19 pandemic to make progression and graduation decisions. This addition of task-based EPAs to a learner’s assessment portfolio provides a substantial boost in data, especially for more concrete clinical tasks.

More frequent EPA assessments of interactions in virtual and simulated environments have filled the gap, helping schools monitor competence and performance and ensuring clerkships have enough data to make confident decisions about a student’s performance and satisfactory completion of a clerkship.

EPAs can be tracked and stored safely in spreadsheets. But standalone spreadsheets don’t help in the macro context, making it challenging to see whether a learner is progressing on an EPA. When it comes time to review a learner’s competencies, busy physicians spend valuable time on extensive data analysis to prepare for review meetings rather than on the review discussions themselves or their other priorities.
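To make the progression question concrete, here is a minimal Python sketch of the analysis a reviewer might otherwise do by hand. Everything in it is an assumption for illustration: the row layout, the learner and EPA labels, and the 1 to 5 entrustment scale are hypothetical and do not come from any particular school or product.

```python
from collections import defaultdict
from datetime import date

# Hypothetical rows as they might be exported from an EPA spreadsheet:
# (learner, EPA, assessment date, entrustment level on an assumed 1-5 scale).
ROWS = [
    ("learner_a", "EPA 1", date(2021, 9, 1), 2),
    ("learner_a", "EPA 1", date(2021, 11, 1), 4),
    ("learner_a", "EPA 1", date(2021, 10, 1), 3),
    ("learner_b", "EPA 1", date(2021, 9, 5), 3),
    ("learner_b", "EPA 1", date(2021, 11, 5), 2),
]


def progression(rows):
    """Group ratings by (learner, EPA) in date order and label the trend."""
    grouped = defaultdict(list)
    for learner, epa, when, level in rows:
        grouped[(learner, epa)].append((when, level))

    summary = {}
    for key, entries in grouped.items():
        entries.sort()  # tuples sort by date first, giving chronological order
        levels = [level for _, level in entries]
        change = levels[-1] - levels[0]  # latest rating minus earliest rating
        if change > 0:
            trend = "improving"
        elif change < 0:
            trend = "declining"
        else:
            trend = "flat"
        summary[key] = {"levels": levels, "trend": trend}
    return summary


if __name__ == "__main__":
    for (learner, epa), info in sorted(progression(ROWS).items()):
        print(learner, epa, info["levels"], info["trend"])
```

Comparing the first and latest rating is a deliberately crude trend measure. A real review would likely weigh the number of observations, the assessors, and the clinical context.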

How to address the growth in scut work

So, how can schools get out from under this administrative burden?

The answer is a recipe of people, process, and enabling technology. But right now people as an ingredient are taking too much of the load.

In the LCME standards document, “Functions and Structure of a Medical School”, the word “software” is not mentioned a single time. The LCME’s accreditation standards are deliberately non-prescriptive. As they state, the school must “engage … in continuous quality improvement processes” but they do not say how. Nor should they. Mandating software would be a prescriptive instruction: one potential solution to the problem of meeting a standard, not the standard itself.

If schools are to put in place a true CQI process, respond to increasing accreditation requirements, and evolve assessment models, all in the pursuit of better training and better healthcare professionals, then software must play a larger role. And the most likely answer is data warehousing and data automation.

Data Warehousing is the process of collecting data from all sources – software vendors, databases, and spreadsheets – and automatically aggregating it into a thoughtfully designed, easy-to-use warehouse.

Data Automation is the process of setting up integrations in software systems (Admissions, LMS, SIS, Assessment, Scheduling, Curriculum, or Exam products) to enable data to automatically flow into the data warehouse, without the need to do custom exports or data drops.
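As a rough illustration of these two ideas, the sketch below loads exports from two source systems into one shared table using only Python’s standard library. The CSV contents, column names, and the single `observations` table are hypothetical, chosen to keep the example small. They are not a description of any vendor’s schema or integration.

```python
import csv
import io
import sqlite3

# Hypothetical exports from two source systems. Real systems would differ.
ASSESSMENT_CSV = "student_id,epa,level\ns1,EPA 1,3\ns2,EPA 1,4\n"
EXAM_CSV = "student_id,exam,score\ns1,Block 1,82\ns2,Block 1,91\n"


def build_warehouse():
    """Create an in-memory database with one shared table (assumed design)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE observations ("
        "student_id TEXT, source TEXT, measure TEXT, value REAL)"
    )
    return conn


def load(conn, source, text, measure_col, value_col):
    """Map one source's columns onto the shared table and insert its rows."""
    rows = csv.DictReader(io.StringIO(text))
    conn.executemany(
        "INSERT INTO observations VALUES (?, ?, ?, ?)",
        [
            (r["student_id"], source, r[measure_col], float(r[value_col]))
            for r in rows
        ],
    )
    conn.commit()


conn = build_warehouse()
# In an automated setup these calls would run on a schedule or on new data,
# rather than after a manual export.
load(conn, "assessment", ASSESSMENT_CSV, "epa", "level")
load(conn, "exam", EXAM_CSV, "exam", "score")

if __name__ == "__main__":
    query = "SELECT * FROM observations WHERE student_id = 's1' ORDER BY source"
    for row in conn.execute(query):
        print(row)
```

Once every source lands in the same table, a question about one student no longer means opening several spreadsheets. It becomes a single query.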

Together, these two technology approaches hold the promise of centralizing data for accreditation and assessment, and of massively reducing the administrative load of a continuous improvement process.

When a school implements data warehousing and data automation, it removes the barriers that currently exist between the decision maker or accrediting body and the information they require. It also eliminates the cost (both time and money) and heavy administrative burden of extracting data from multiple sources.

One of the key results of implementing data warehousing and data automation is segmented reporting that quickly answers questions. By pointing data visualization software at the data warehouse, schools can easily provide committees and leadership teams with timely reports and visualizations, equipping teams with the full picture for decision making.
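Segmented reporting can be as simple as slicing the same centralized records along different dimensions. The Python sketch below assumes hypothetical records with `cohort`, `site`, and `score` fields. The names and numbers are invented for illustration only.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical centralized records. Field names and values are made up.
RECORDS = [
    {"cohort": "2024", "site": "north", "score": 80},
    {"cohort": "2025", "site": "north", "score": 90},
    {"cohort": "2024", "site": "south", "score": 70},
]


def segment_report(records, key):
    """Return the mean score for each value of the chosen segment key."""
    buckets = defaultdict(list)
    for record in records:
        buckets[record[key]].append(record["score"])
    return {segment: mean(scores) for segment, scores in buckets.items()}


if __name__ == "__main__":
    print(segment_report(RECORDS, "site"))
    print(segment_report(RECORDS, "cohort"))
```

The same function answers a question by site or by cohort without a new export. That is the kind of flexibility a visualization tool would build on.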

And finally, leveraging a data warehouse enables schools to turn the time spent drowning in data into an understanding of where action is needed to drive student progression, CQI reform, and accreditation success. At Acuity, we are committed to enabling schools to make informed decisions based on the complete picture. Acuity Analytics brings together a school’s existing data and surfaces insights that are otherwise buried in stacks of spreadsheets.

Related Articles

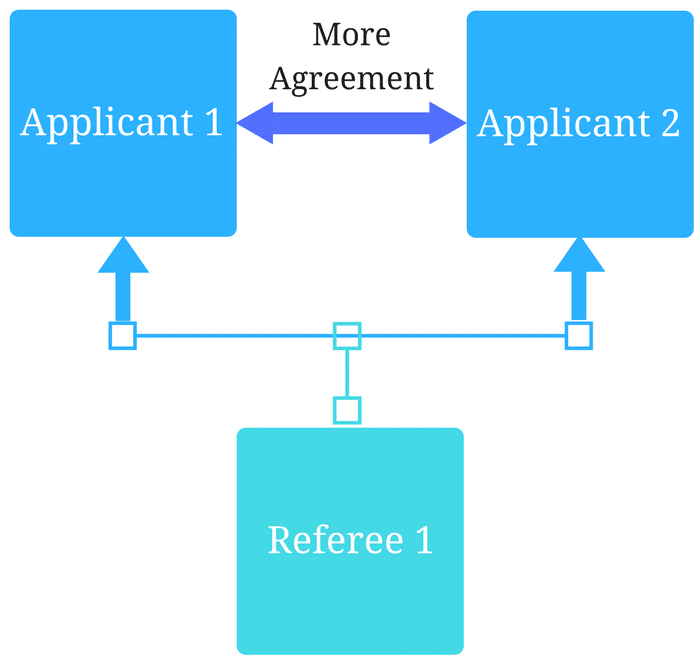

How interviews could be misleading your admissions...

Most schools consider the interview an important portion of their admissions process, hence a considerable…

Reference letters in academic admissions: useful o...

Because of the lack of innovation, there are often few opportunities to examine current legacy…